Code

import pandas as pd

df_telematics = pd.read_csv('data/Driver_Behavior.csv')

df_telematics_analysis = df_telematics.drop(columns=['behavior_label'])It was synthetically generated, taken from kaggle vehicle telemetry for driver behavior. Originally the dataset has a target label, but we are going to remove it and explore the data without those labels. The idea is to see if we can identify and clasify drivers behaviors based on their telemetry data.

duplicated rows: 0

<class 'pandas.DataFrame'>

RangeIndex: 30000 entries, 0 to 29999

Data columns (total 10 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 speed_kmph 30000 non-null float64

1 accel_x 30000 non-null float64

2 accel_y 30000 non-null float64

3 brake_pressure 30000 non-null float64

4 steering_angle 30000 non-null float64

5 throttle 30000 non-null float64

6 lane_deviation 30000 non-null float64

7 phone_usage 30000 non-null int64

8 headway_distance 30000 non-null float64

9 reaction_time 30000 non-null float64

dtypes: float64(9), int64(1)

memory usage: 2.3 MBNo duplicated values and everything is numeric, a good scenario for a PCA

import seaborn as sns

import matplotlib.pyplot as plt

plt.figure(figsize=(10, 8))

corr = df_telematics_analysis[[

"throttle",

"brake_pressure",

"accel_x",

"speed_kmph",

"accel_y",

"steering_angle",

"headway_distance",

"lane_deviation",

"reaction_time",

"phone_usage",

]].corr()

sns.heatmap(corr, annot=True, cmap='coolwarm', fmt=".2f")

plt.title("Correlation Matrix")

plt.show()

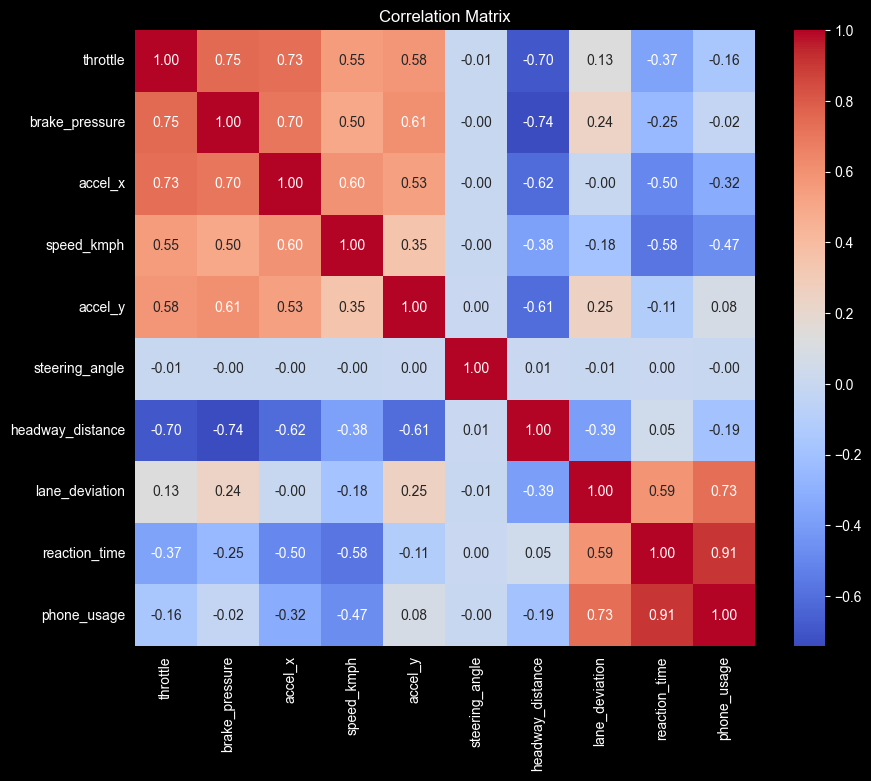

The steering_angle is truly independant, all correlations are very close to \(0\).

Correlation between phone_usage and reaction_time if very high at \(0.91\), suggest that the if the driver is on their phone, their reaction time will be slow, and if the reaction time is slow, it’s very likely that the driver was on their phone.

aggression_vars = [

'throttle',

'brake_pressure',

'accel_x',

'speed_kmph',

'accel_y',

]

aggression_corr = df_telematics[aggression_vars].corr()

plt.figure(figsize=(6, 5))

sns.heatmap(

aggression_corr,

annot=True,

cmap='Reds',

fmt=".2f",

linewidths=0.5

)

plt.title("Correlation: The Aggression Box")

plt.show()

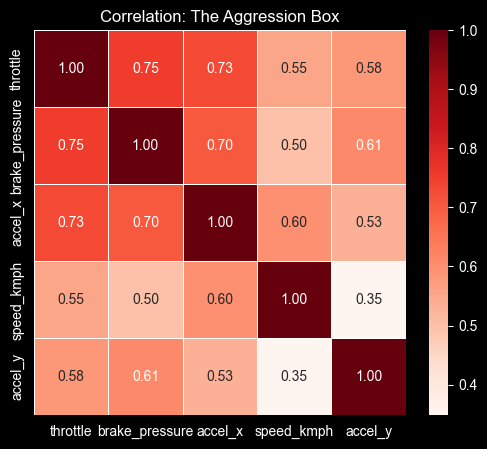

Looking at the correlation of accel_x, brake_pressure and throttle we can infer that the “Agressive drivers” they press the gas and jump straight to smashing the brake pedal without “coasting.” This seems like a classic signature of “tailgating,” or speeding up to something, then panic breaking.

The speed_kmph has a lower correlation, indicating that the Aggresion behavior is more about force, because probably we can find aggresive behavior not at high speeds.

The difference on the correlation values between accel_x and accel_y with the other variables, tell us that the aggresive behavior is likely to happen in a straight line reckless driving rather than a drift king cornering behavior.

ncomp

0 0.000000

1 0.427785

2 0.703020

3 0.803028

4 0.851606

5 0.893346

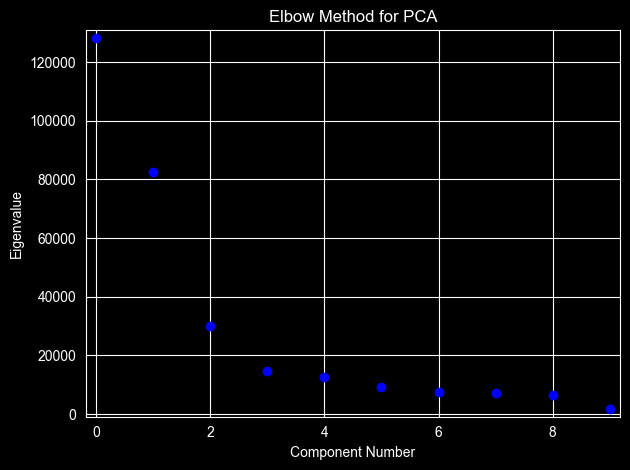

Name: rsquare, dtype: float64The elbow method suggests two components explain 70% of the variance, and three components explain 80%.

I think that three components are more adequate.

import plotly.io as pio

pio.renderers.default = "notebook_connected"

import plotly.express as px

df_vis = pca_3.factors.copy()

df_vis.columns = ['Aggression (PC1)', 'Distraction (PC2)', 'Steering (PC3)']

df_vis['Speed'] = df_telematics_analysis['speed_kmph']

df_vis['Phone'] = df_telematics_analysis['phone_usage']

df_vis['Brake'] = df_telematics_analysis['brake_pressure']

fig = px.scatter_3d(

df_vis,

x='Aggression (PC1)',

y='Distraction (PC2)',

z='Steering (PC3)',

color='Aggression (PC1)',

color_continuous_scale='Viridis',

opacity=0.7,

hover_data=['Speed', 'Phone', 'Brake'],

title="3D Driver Behavior Analysis"

)

fig.update_traces(marker=dict(size=3))

from pathlib import Path

out = Path("charts/interactive scatter.html")

fig.write_html(out, include_plotlyjs="cdn")

fig.show()import plotly.express as px

df_vis = df_telematics_analysis.copy()

df_vis['Aggression'] = pca_3.factors.iloc[:, 0]

df_vis['Distraction'] = pca_3.factors.iloc[:, 1]

fig = px.parallel_coordinates(

df_vis,

dimensions=['speed_kmph', 'brake_pressure', 'phone_usage', 'Aggression', 'Distraction'],

color="Aggression",

color_continuous_scale=px.colors.diverging.Tealrose,

title="How Raw Driving Data Flows into PCA Scores"

)

out = Path("charts/interactive flow chart.html")

fig.write_html(out, include_plotlyjs="cdn")

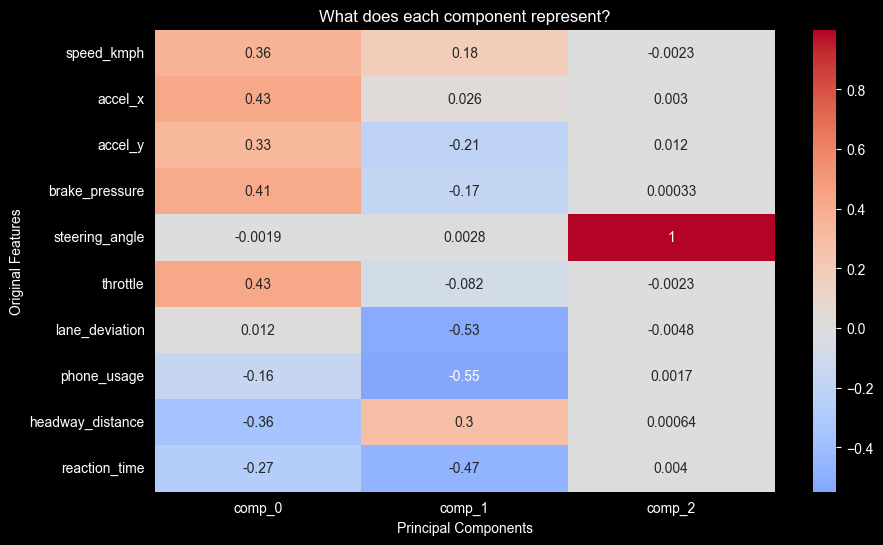

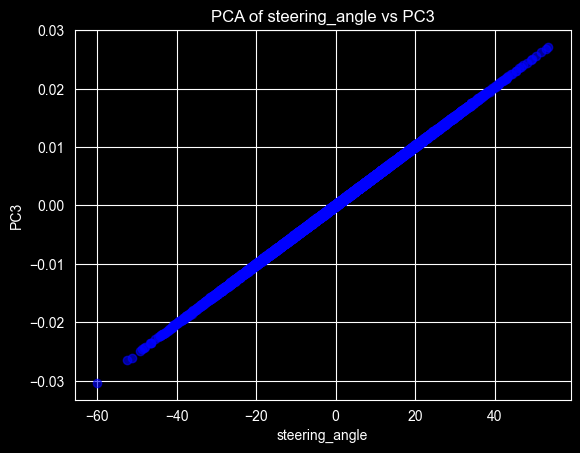

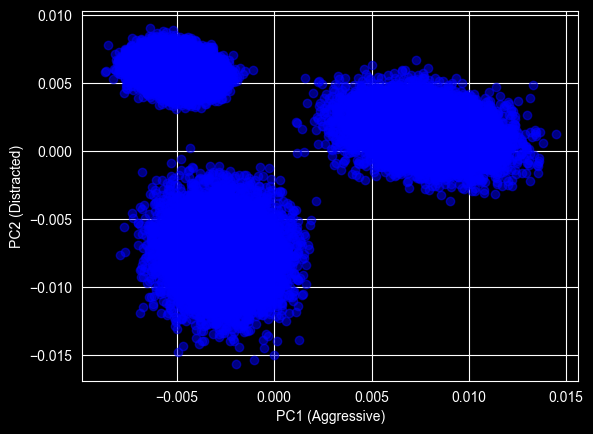

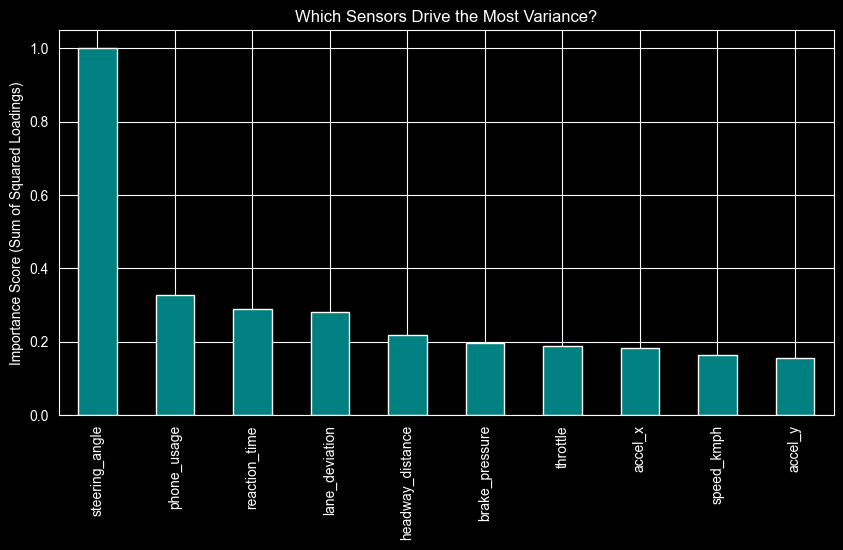

fig.show()with three components we can see a separation between those three. ## PC1 (Aggresive) highs: - throttle - brake_pressure - accel_x lows: - headway_distance ## PC2 (Distracted) highs: - lane_deviation - phone_usage - reaction_time ## PC3 (Chill) lows: - steering_angle

Why does the PC3 have the lowest steering_angle value?

The fact that we have a perfect line in the relationship between the steering_angle and the PC3, means that this is the only behavior that can be grouped on this component.

We assume that steering doesn’t define anything on the other two Principal Components, it could not be grouped on those components.

that represents the Aggressive drivers, they have higher values on the PC1. ## top left (High Distraction, Low Aggression) that represents the distracted drivers, they have higher values on the PC2. ## bottom left the Safe-Chill behavior, where they don’t have any high value on the PC1 or PC2 components.

if we can afford only 2 sensors for detecting distracted behavior, we should choose phone_usage and reaction_time. if we repeat the same exersice for detecting aggresive behavior, we should choose breal_pressure and throttle.

For this part we are going to do create a pipeline using scikit-learn

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import StandardScaler

from sklearn.decomposition import PCA

from sklearn.cluster import KMeans

seed = 42

X = df_telematics.drop(columns=['behavior_label'])

y = df_telematics['behavior_label']

pipeline = Pipeline([

('scaler', StandardScaler()),

('pca', PCA(n_components=3)),

('clusterer', KMeans(n_clusters=3, random_state=seed))

])

pipeline.fit(X)

y_pred_clusters = pipeline.predict(X)Adjusted Rand Score: 1.00

Homogeneity Score: 1.00We achieved an Adjusted Rand Score of \(1.00\). This indicates a perfect alignment between our unsupervised cluster and the ground truth.

It confirms that the driver behaviors (Safe, Aggressive and Distracted) are linearly separable in the PCA space, and our pipeline can autonomously indentify these profiles.